Nvidia announced today that it is releasing a family of foundation AI models called Cosmos that can be used to train humanoids, industrial robots and self-driving cars. While language models learn to generate text by training on copious amounts of books, articles and social media posts, Cosmos is designed to generate images and 3D models of the physical world.

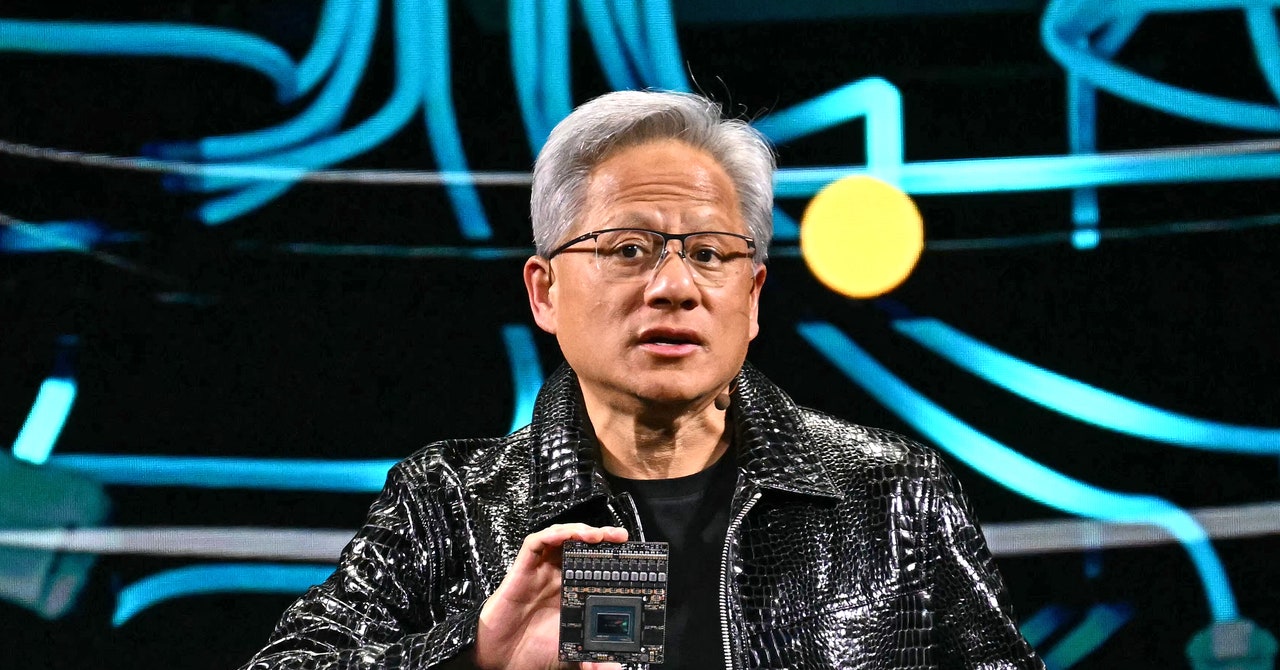

During a keynote presentation at the annual CES conference in Las Vegas, Nvidia CEO Jensen Huang showed examples of Cosmos being used to simulate activities inside warehouses. Cosmos was trained on 20 million hours of real footage of “people walking, hands moving, manipulating things,” Huang said. “It’s not about generating creative content, but about teaching the AI to understand the physical world.”

Researchers and startups hope these kinds of foundation models can give robots used in factories and homes more sophisticated capabilities. For example, Cosmos can generate realistic video footage of boxes falling from shelves inside a warehouse, which can be used to train a robot to recognize accidents. Users can also fine-tune the models on their own data.

A number of companies are already using Cosmos, Nvidia says, including humanoid robot startups Agility and Figure AI, as well as self-driving car companies like Uber, Waabi and Wayve.

Nvidia also announced software designed to help different kinds of robots learn to perform new tasks more efficiently. The new feature, part of Nvidia’s existing Isaac robot simulation platform, will allow robot builders to take a small number of examples of a desired task, such as grasping a specific object, and generate large amounts of synthetic training data from them.

Nvidia hopes that Cosmos and Isaac will appeal to companies that want to build and use humanoid robots. Huang was joined on stage at CES by life-size images of 14 different humanoid robots developed by companies including Tesla, Boston Dynamics, Agility and Figure.

Along with Cosmos, Nvidia announced Project Digits, a $3,000 “personal AI supercomputer” that can run a large language model of up to 200 billion parameters without relying on cloud services from AWS or Microsoft. The company also announced its long-awaited next-generation RTX Blackwell GPUs and upcoming software tools to help build AI agents.